This white paper examines practical approaches to predicting hardware failure in massive AI training farms. It traces the technical evolution from classical grid computing to modern distributed systems that span edge devices, cloud resources, and on-premise AI clusters. The guidance focuses on measurable engineering trade-offs, data pipelines, and operational patterns that reduce downtime and cost.

Background: Evolution from Grid to AI Training Farms

Grid computing established the first scalable model for sharing compute across administrative domains. Engineers designed batch schedulers, shared file systems, and monitoring that assumed relatively static, long-running jobs. Those assumptions shaped hardware management practices that prioritized throughput over rapid fault detection.

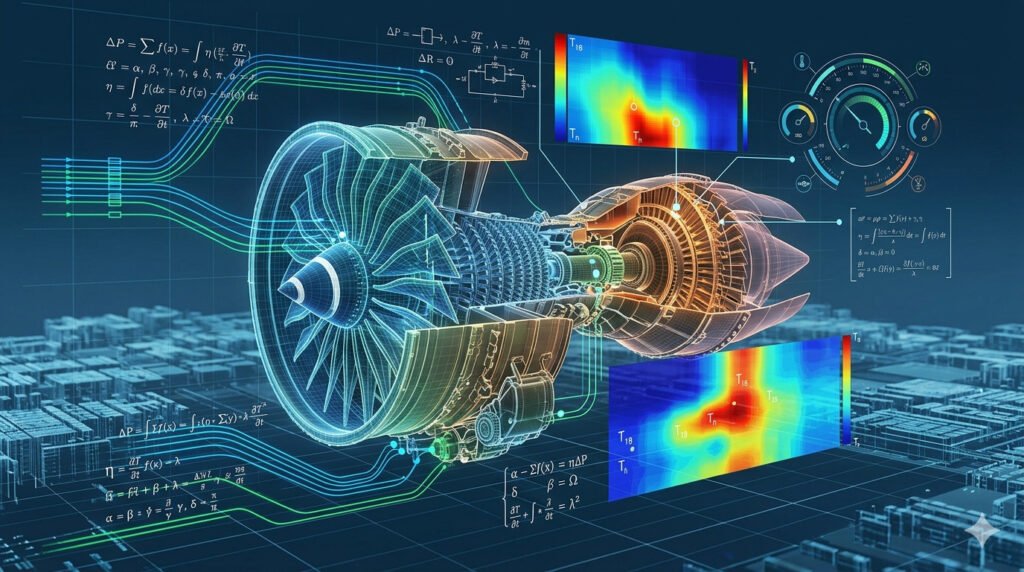

Modern AI training farms impose different constraints. GPU clusters operate at high power density and under sustained thermal stress. Training runs can last days or weeks, and a single large job can draw megawatts of power. Those operational realities multiply failure modes and shorten the mean time between faults for components like power supplies, cooling subsystems, and accelerators.

The industry responded by moving from coarse checklists to continuous telemetry and predictive maintenance. That shift required new data architectures, tighter control loops between sensing and orchestration, and a shift in team responsibilities from reactive repair to proactive risk reduction.

Predictive Hardware Failure Monitoring for AI Farms

Predictive monitoring combines high-fidelity telemetry with models that predict component degradation. For AI farms, relevant signals include per-GPU temperature, voltage rail stability, ECC error rates, fan speed, and power delivery metrics. Sampling frequency and resolution determine the signal-to-noise ratio available to models.

Design for scale. Telemetry pipelines must handle millions of metrics per minute while preserving time alignment, sample lineage, and data quality. Engineers must balance retention with storage cost, choosing which raw traces to keep versus aggregating into rollups and features for modeling. Metadata about firmware, workload mix, and rack topology improves model performance.
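The trade-off between raw traces and rollups can be illustrated with a minimal sketch. The function name `rollup`, the 60-second window, and the `(timestamp, value)` sample shape are assumptions made for illustration, not a prescribed format.

```python
from collections import defaultdict
from statistics import fmean


def rollup(samples, window_s=60):
    """Aggregate (timestamp_s, value) samples into fixed-size windows.

    Returns one dict per window, sorted by window start, holding the
    count, min, max, and mean of the raw samples in that window.
    """
    buckets = defaultdict(list)
    for ts, value in samples:
        # Align each sample to the start of the window it falls in.
        window_start = int(ts // window_s) * window_s
        buckets[window_start].append(value)
    return [
        {
            "window_start": start,
            "count": len(vals),
            "min": min(vals),
            "max": max(vals),
            "mean": fmean(vals),
        }
        for start, vals in sorted(buckets.items())
    ]


# Hypothetical GPU temperature samples: (seconds, degrees C).
raw = [(0, 61.0), (20, 63.0), (40, 65.0), (60, 70.0), (90, 74.0)]
rollups = rollup(raw, window_s=60)
```

Keeping min and max alongside the mean preserves short excursions that a mean-only rollup would hide, at a fraction of the raw storage cost.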

Finally, integrate predictions into operations. A prediction without action is wasted. Effective systems convert risk scores into precise remediation steps: live migrate workloads, throttle clock rates, schedule hardware replacement, or trigger hardware-level safe shutdowns. Define automated remediation thresholds and human-in-the-loop escalation policies.
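One way to express automated remediation thresholds is a small mapping from risk score to action. The sketch below is illustrative only: the threshold values, the action names, and the choice of which actions need human approval are all assumptions that a real deployment would tune against its own false-alarm and lead-time data.

```python
from dataclasses import dataclass

# Hypothetical thresholds on a risk score in [0, 1].
THROTTLE_AT = 0.50
MIGRATE_AT = 0.70
REPLACE_AT = 0.90


@dataclass(frozen=True)
class Remediation:
    action: str
    needs_human_approval: bool


def choose_remediation(risk_score: float) -> Remediation:
    """Map a risk score to the most severe remediation it justifies."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be in [0, 1]")
    if risk_score >= REPLACE_AT:
        # Hardware replacement is costly, so escalate to a human.
        return Remediation("migrate_and_schedule_replacement", True)
    if risk_score >= MIGRATE_AT:
        return Remediation("live_migrate_workloads", False)
    if risk_score >= THROTTLE_AT:
        return Remediation("throttle_clock_rates", False)
    return Remediation("monitor", False)
```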

Telemetry and Data Engineering for Massive Fleets

Collecting telemetry at scale requires a layered approach. Local agents perform high-frequency sampling and lightweight preprocessing. Edge collectors aggregate and perform initial filtering. Central pipelines handle normalization, enrichment, and storage. This architecture minimizes network costs and avoids central bottlenecks.

A clear schema and time-series alignment matter. Use consistent tags for host, rack, GPU serial, firmware, and workload ID. Store raw events for a rolling window sufficient for training models and retain compressed summaries for compliance and long-term trend analysis. Ensure traceability from a prediction back to original samples.
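As a rough illustration, the tags listed above could be carried on every sample as a typed record. The class name `GpuSample`, the field names, and the nanosecond timestamp are assumptions; the point is that every sample carries the same tags and can be snapped to a common time grid.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSample:
    ts_ns: int          # sample time, nanoseconds since epoch
    host: str
    rack: str
    gpu_serial: str
    firmware: str
    workload_id: str
    metric: str         # e.g. "temperature_c"
    value: float

    def series_key(self) -> tuple:
        """Identify the time series this sample belongs to."""
        return (self.host, self.rack, self.gpu_serial, self.metric)

    def aligned_ts(self, period_ns: int) -> int:
        """Snap the timestamp down to a shared sampling grid."""
        return self.ts_ns - (self.ts_ns % period_ns)


# Hypothetical values for illustration.
sample = GpuSample(
    ts_ns=1_700_000_000_350_000_000,
    host="node-017",
    rack="r12",
    gpu_serial="GPU-EXAMPLE-0001",
    firmware="1.2.3",
    workload_id="job-42",
    metric="temperature_c",
    value=71.5,
)
```

Aligning on a shared grid (here, `aligned_ts(1_000_000_000)` for a one-second grid) lets samples from different agents be joined without interpolation, while the untouched `ts_ns` keeps the link back to the original reading.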

Comparison of detection contexts

| Dimension | Grid (classic) | Edge | Cloud / On-prem AI Farm |
|---|---|---|---|
| Latency tolerance | High | Low | Low |
| Telemetry volume | Low | Medium | Very high |
| Local processing | Minimal | Required | Required |
| Cost sensitivity | High | Medium | High |

Predictive Models and Evaluation Metrics

Select models that match operational needs. Time series anomaly detectors catch sudden deviations. Degradation models based on survival analysis estimate time to failure. Hybrid approaches that combine rule-based thresholds with learned models improve interpretability and reliability in production.
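A sudden-deviation detector can be as simple as a trailing-window z-score. This is one common technique, shown as a sketch rather than a recommended production model; the window size, threshold, and sample values are assumptions.

```python
from collections import deque
from statistics import fmean, pstdev


def rolling_zscore_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value deviates from the trailing-window
    mean by more than `threshold` standard deviations."""
    history = deque(maxlen=window)
    anomalies = []
    for i, v in enumerate(values):
        # Only score once a full window of history is available.
        if len(history) == window:
            mean = fmean(history)
            std = pstdev(history)
            if std > 0 and abs(v - mean) / std > threshold:
                anomalies.append(i)
        # Note: anomalous points also enter the history, which
        # temporarily widens the window's spread afterwards.
        history.append(v)
    return anomalies


# Hypothetical GPU temperatures with one spike at index 6.
temps = [60.0, 61.0, 60.5, 61.5, 60.0, 60.8, 85.0, 61.0]
spikes = rolling_zscore_anomalies(temps)
```

A detector like this catches abrupt excursions but says nothing about slow degradation, which is where survival-style models or learned degradation features come in.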

Focus on actionable metrics. Use precision at specific recall targets, false alarm rate per rack per month, and lead time distribution as primary evaluation measures. These metrics map directly to operational cost: false positives increase unnecessary interventions; missed detections increase outage duration and repair cost.
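The three measures can be computed with a few lines of code. The sketch below assumes binary labels (1 = failure followed, 0 = no failure), a 30-day month, and hypothetical function names; it is meant to pin down definitions, not to replace an evaluation library.

```python
from statistics import median


def precision_at_recall(scores, labels, target_recall):
    """Precision at the highest score cutoff that reaches target_recall."""
    total_pos = sum(labels)
    if total_pos == 0:
        raise ValueError("need at least one positive label")
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    tp = fp = 0
    for i, (score, label) in enumerate(ranked):
        tp += label
        fp += 1 - label
        # Evaluate only after a full group of tied scores is consumed.
        last_of_tie = i + 1 == len(ranked) or ranked[i + 1][0] != score
        if last_of_tie and tp / total_pos >= target_recall:
            return tp / (tp + fp)
    return 0.0


def false_alarms_per_rack_month(false_alarms, racks, days):
    """Normalize a false-alarm count by fleet size and a 30-day month."""
    return false_alarms / racks / (days / 30.0)


# Hypothetical evaluation data.
scores = [0.9, 0.8, 0.7, 0.6, 0.2]
labels = [1, 0, 1, 1, 0]
p_at_full_recall = precision_at_recall(scores, labels, target_recall=1.0)
alarm_rate = false_alarms_per_rack_month(false_alarms=36, racks=12, days=90)
median_lead_h = median([2.0, 6.0, 12.0, 30.0])  # hours of warning
```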

Robustness practices are essential. Validate models on historical incidents, inject synthetic faults to test sensitivity, and maintain separate model validation pipelines. Monitor model drift by tracking score distributions and re-label training data when hardware or firmware changes alter behavior.
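One widely used way to track a shift in score distributions is the population stability index (PSI). The text does not prescribe a specific drift statistic, so treat this as one option; the bin count, the epsilon floor, and the assumption that scores lie in [0, 1] are choices made for this sketch.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population stability index between two samples of scores in [0, 1]."""

    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            # Clamp so that a score of exactly 1.0 lands in the last bin.
            idx = min(int(s * bins), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps to keep the logarithm finite.
        return [max(c / len(scores), eps) for c in counts]

    ref = proportions(reference)
    cur = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical score samples: a uniform baseline and a shifted week.
baseline = [i / 100 for i in range(100)]
shifted = [min(0.99, 0.5 + i / 200) for i in range(100)]
drift = psi(baseline, shifted)
```

A PSI of zero means identical binned distributions; the alerting threshold is a policy choice that should be calibrated on periods with known firmware or hardware changes.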

Operational Integration and Runbook Automation

Design clear integration points between prediction outputs and orchestration layers. Predictions should expose confidence intervals and suggested actions rather than binary alerts. Orchestrators can then prioritize live migration, power cycling, or maintenance windows based on business impact.

Automate common remediation while preserving safe human oversight. Implement pre-approved playbooks for common failure classes and gate destructive actions with verification steps. Maintain audit logs that link predictions to executed actions to support post-incident analysis and continuous improvement.
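A minimal sketch of playbook gating with an audit trail follows. The playbook table, failure-class names, and status strings are hypothetical, and the function only records the decision; a real system would call the orchestrator where the comment indicates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Playbook:
    action: str
    destructive: bool


# Hypothetical pre-approved playbooks keyed by failure class.
PLAYBOOKS = {
    "fan_degradation": Playbook("throttle_clock_rates", destructive=False),
    "psu_instability": Playbook("power_cycle_node", destructive=True),
}


def run_playbook(prediction_id, failure_class, audit_log, approved_by=None):
    """Decide whether a playbook may run and record the decision."""
    playbook = PLAYBOOKS.get(failure_class)
    if playbook is None:
        status = "escalated_no_playbook"
    elif playbook.destructive and approved_by is None:
        # Destructive actions wait for an explicit human approval.
        status = "blocked_pending_approval"
    else:
        # A real system would invoke the orchestrator here.
        status = "executed"
    audit_log.append({
        "prediction_id": prediction_id,
        "failure_class": failure_class,
        "action": playbook.action if playbook else None,
        "approved_by": approved_by,
        "status": status,
    })
    return status


audit_log = []
run_playbook("pred-001", "fan_degradation", audit_log)
run_playbook("pred-002", "psu_instability", audit_log)
run_playbook("pred-002", "psu_instability", audit_log, approved_by="oncall")
```

Because every call appends a record, including blocked and escalated ones, the log links each prediction to what was or was not done about it.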

Train operations teams on both the models and their failure modes. Teams should understand why a prediction fires, the costs of each remediation, and the fallback procedures if automation fails. Cross-functional drills reduce mean time to repair and improve trust in predictive systems.

Integrating Grid, Edge, Cloud for Failure Prediction

A mixed infrastructure requires consistent semantics for telemetry and actions. Map identifiers unambiguously across administrative boundaries so that a GPU serial means the same thing at the edge collector, the central model, and the data center management console. That mapping simplifies correlation and root cause analysis.

Federated modeling can reduce data movement while respecting locality. Train local models at the edge or rack level to capture micro-patterns and aggregate parameters centrally. This architecture reduces bandwidth and preserves privacy while leveraging global patterns to improve accuracy on rare failure modes.
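The central aggregation step can be sketched as a sample-weighted average of site parameters, in the style of federated averaging. The text does not name a specific algorithm, so this is an assumption, as are the flat list-of-floats parameter format and the function name.

```python
def federated_average(site_updates):
    """Combine per-site parameters into one global parameter vector.

    site_updates: list of (weights, n_samples) pairs, where weights is
    a list of floats and n_samples is the number of local training
    samples behind it. Sites with more data get proportionally more say.
    """
    total = sum(n for _, n in site_updates)
    if total <= 0:
        raise ValueError("need at least one training sample across sites")
    dim = len(site_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in site_updates:
        if len(weights) != dim:
            raise ValueError("all sites must share one parameter shape")
        for j, w in enumerate(weights):
            averaged[j] += w * n / total
    return averaged


# Hypothetical updates from two racks; only parameters leave the site.
global_weights = federated_average([
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
])
```

Only the parameter vectors and sample counts cross the network, which is where the bandwidth saving comes from.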

Plan for diverse operational constraints. Edge sites may lack spare capacity for live migration. Public cloud instances may not expose low-level telemetry. Build fallback logic that adapts remediation strategies to what the environment supports, and include environmental constraints as features in your models.
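Fallback logic of this kind can be sketched as a preference-ordered list of actions filtered by what each environment supports. The capability names, action names, and ordering below are hypothetical.

```python
# Actions in order of preference, most desirable first.
PREFERRED_ACTIONS = [
    "live_migrate",
    "throttle",
    "schedule_replacement",
    "alert_operator",
]

# Capabilities an environment must offer for each action (assumed).
REQUIRES = {
    "live_migrate": {"spare_capacity"},
    "throttle": {"low_level_control"},
    "schedule_replacement": {"physical_access"},
    "alert_operator": set(),
}


def pick_action(capabilities):
    """Return the most preferred action the environment can support."""
    for action in PREFERRED_ACTIONS:
        if REQUIRES[action] <= set(capabilities):
            return action
    return "alert_operator"


# Hypothetical environments.
on_prem = pick_action({"spare_capacity", "low_level_control", "physical_access"})
edge_site = pick_action({"low_level_control", "physical_access"})
public_cloud = pick_action(set())
```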

Infrastructure Roadmap and FAQ

Operationalizing predictive failure monitoring is a multi-year effort. Prioritize efforts that provide the highest return on downtime reduction and integrate work across firmware, mechanical, and software teams. The roadmap below sequences key milestones.

- Baseline observability: standardize metrics and deploy edge collectors.

- Central pipeline: build scalable ingestion, normalization, and storage.

- Feature engineering: define and compute degradation features.

- Prototype models: validate using historical incidents and synthetic faults.

- Automation: implement safe remediation playbooks and orchestration hooks.

- Scale and federation: deploy models across sites and enable parameter sharing.

- Continuous improvement: implement drift detection and retraining pipelines.

FAQ

Q: What sampling frequency is required for GPU telemetry?

A: Start with 1 Hz for temperature and power rails and 0.1 Hz for fan speed and ECC counters. Increase sampling for transient issues. Adjust based on network and storage constraints.
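To see what these starting rates imply for storage and network planning, a back-of-the-envelope calculation helps. The sketch assumes a single stream per signal per GPU and a 10,000-GPU fleet; real hardware exposes several rails and fans per device, so the true volume will be higher.

```python
SECONDS_PER_DAY = 86_400

# Starting rates from the answer above, one stream per signal (assumed).
RATES_HZ = {
    "temperature": 1.0,
    "power_rail": 1.0,
    "fan_speed": 0.1,
    "ecc_counter": 0.1,
}


def samples_per_day(gpus, rates_hz=RATES_HZ):
    """Total samples per day across the fleet at the given rates."""
    per_gpu_hz = sum(rates_hz.values())
    return round(gpus * per_gpu_hz * SECONDS_PER_DAY)


per_gpu = samples_per_day(1)        # 2.2 Hz * 86,400 s
fleet = samples_per_day(10_000)     # hypothetical fleet size
```

At 2.2 samples per second per GPU, a single GPU produces 190,080 samples per day and the assumed fleet roughly 1.9 billion, before any increase for transient issues.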

Q: How do you handle label scarcity for failure events?

A: Use survival analysis, expert rules, and synthetic fault injection. Combine weak supervision and transfer learning from similar hardware generations.
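Survival analysis helps with label scarcity because devices that have not failed yet still contribute information as censored observations. A minimal Kaplan-Meier estimator, written from the standard definition with hypothetical data, shows the idea.

```python
def kaplan_meier(durations, failed):
    """Kaplan-Meier survival estimate.

    durations: observed time in service for each device.
    failed: True if the device failed at that time, False if it was
            still healthy when observation ended (censored).
    Returns [(t, S(t))] at each distinct failure time.
    """
    events = sorted(zip(durations, failed))
    at_risk = len(events)
    survival = 1.0
    curve = []
    i = 0
    while i < len(events):
        t = events[i][0]
        deaths = removed = 0
        # Consume every device observed at exactly time t.
        while i < len(events) and events[i][0] == t:
            deaths += 1 if events[i][1] else 0
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / at_risk
            curve.append((t, survival))
        # Failed and censored devices both leave the risk set.
        at_risk -= removed
    return curve


# Hypothetical hours in service for five GPUs; two are censored.
curve = kaplan_meier(
    durations=[100, 200, 200, 300, 400],
    failed=[True, False, True, False, True],
)
```

With only three observed failures, the two censored devices still shape the estimate by staying in the risk set until their observation ends.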

Q: How do you measure model effectiveness operationally?

A: Track precision at fixed recall, false alerts per rack per month, median lead time, and reduction in mean time to repair.

Q: How do you avoid automation-induced outages?

A: Require staged automation with verification steps, circuit breakers, and human escalation paths. Log all actions and maintain rollback procedures.
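A circuit breaker for remediation can be sketched as a cap on automated actions within a sliding time window; once the cap is hit, further actions are refused and should go to a human. The class name, limits, and the use of caller-supplied timestamps are assumptions for illustration.

```python
from collections import deque


class RemediationBreaker:
    """Refuse automated actions once too many ran in a recent window."""

    def __init__(self, max_actions, window_s):
        self.max_actions = max_actions
        self.window_s = window_s
        self._times = deque()

    def allow(self, now_s):
        """Return True and record the action if it is within budget."""
        # Drop actions that have aged out of the window.
        while self._times and now_s - self._times[0] >= self.window_s:
            self._times.popleft()
        if len(self._times) >= self.max_actions:
            return False  # breaker open: escalate to a human instead
        self._times.append(now_s)
        return True


# Hypothetical budget: at most 2 automated actions per 60 seconds.
breaker = RemediationBreaker(max_actions=2, window_s=60)
decisions = [breaker.allow(t) for t in (0, 10, 20, 61)]
```

A burst of simultaneous predictions is often a sign of a shared cause such as a bad firmware push or a faulty sensor feed, which is exactly when unattended automation is most dangerous.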

Conclusion – Predicting Hardware Failure

Predictive hardware failure monitoring reduces downtime in AI training farms when it couples precise telemetry, appropriate modeling, and robust operational integration. The shift from grid-era batch assumptions to continuous, high-density AI infrastructure demands new data pipelines and cross-team processes. Invest in layered telemetry, clear evaluation metrics, and phased automation to lower costs and improve reliability.